Overview

This research project investigates how different levels of transparency in AI-supported system suggestions affect efficiency, accuracy, user satisfaction, and trust in form-based workflows. I used a mixed-methods approach, combining qualitative interviews, a focus group, and a controlled user study, to design and evaluate multiple concepts. The results identify simple confidence indicators as the most effective solution, enabling faster, more accurate decisions while maintaining user trust.

Role

UX Researcher & Designer

Scope

User Research, UX Concept, UI Design, UX Evaluation

Platform

Web

Duration

~ 6 months

Tools

Adobe XD

balsamiq

LimeSurvey

SPSS

Miro

Citavi

Microsoft 365

Context

In B2B invoicing workflows, accuracy is critical. At the same time, tasks are repetitive and time-consuming: users report spending up to 45 minutes per invoice because of long forms and recurring inputs, which creates clear potential for intelligent assistance.

However, automated suggestions do not reduce responsibility. Users remain fully accountable and validate every input, leading to re-checking behavior, hesitation, and low trust, especially when system decisions lack clarity.

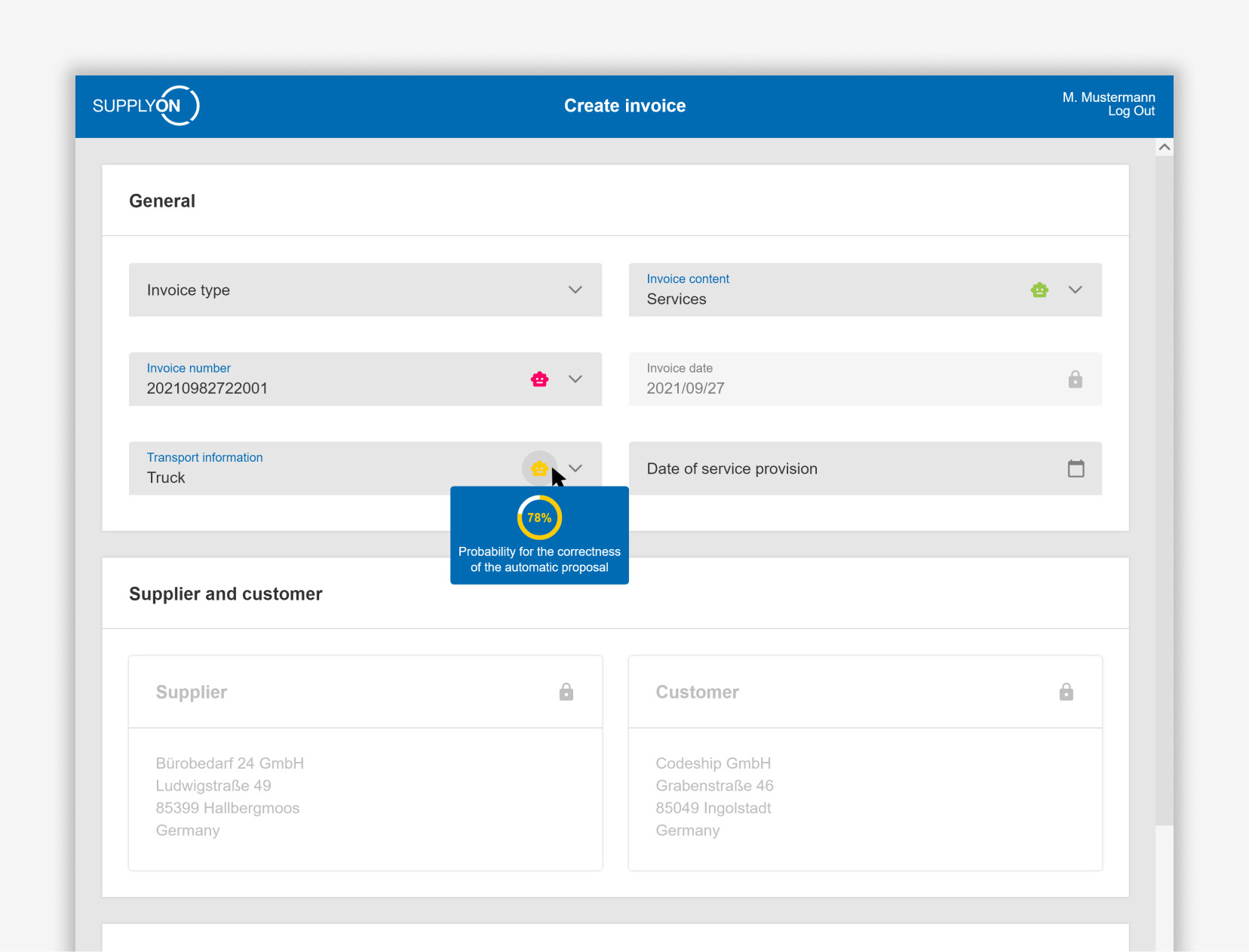

This project focuses on how AI-supported suggestions should be presented to enable fast, confident validation without compromising user control. It was conducted in collaboration with SupplyOn, using their eInvoicing platform as the application context.

UCD Approach

The project followed a user-centered design process, combining qualitative exploration with controlled evaluation. A method triangulation approach was applied across pre-studies and a main study. Insights informed design principles and guided the development of concept variants.

Prestudies

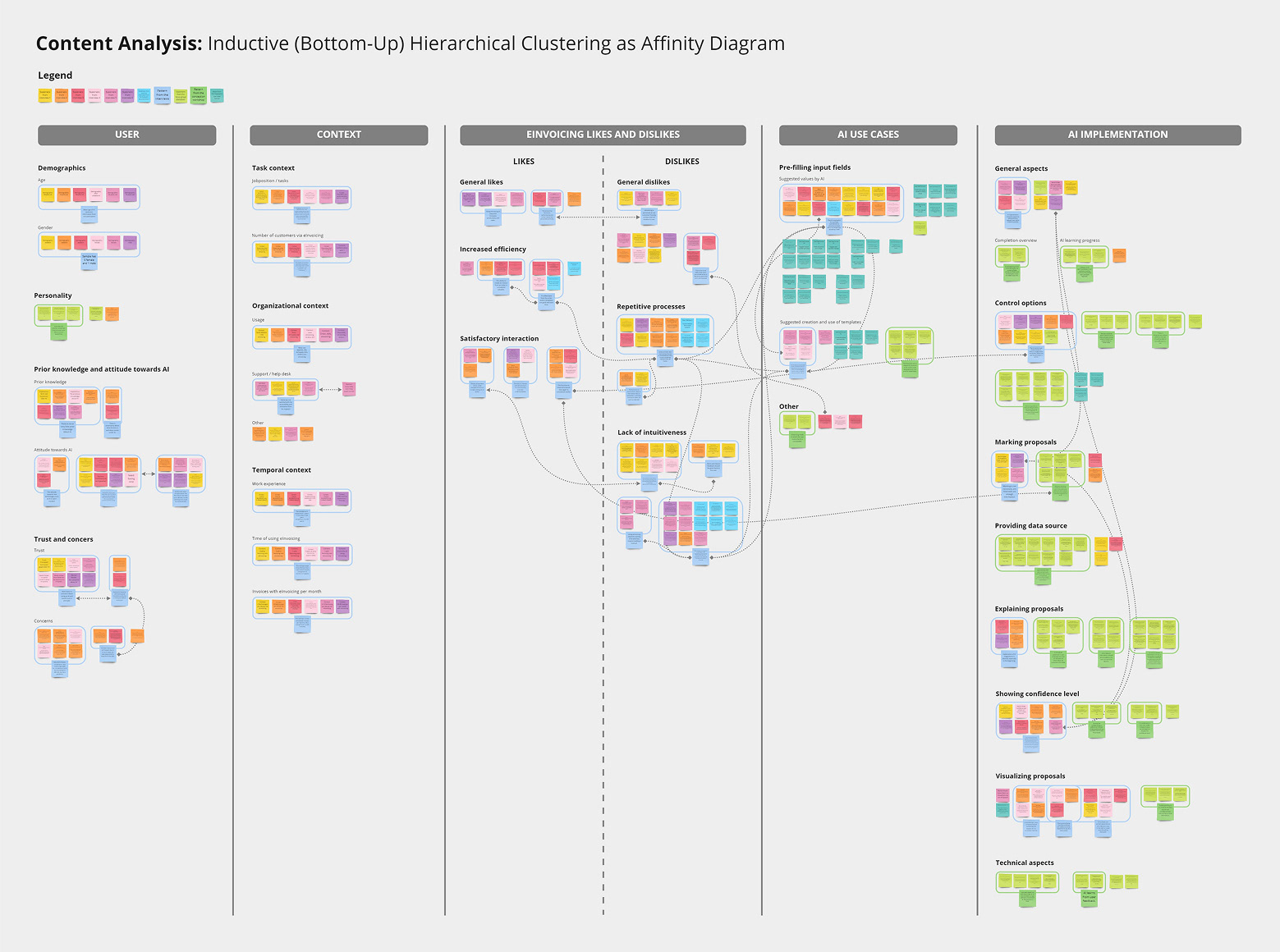

Qualitative pre-studies, including user interviews and a focus group with domain experts, explored workflows and expectations. Data was structured through hierarchical affinity clustering and further analyzed using a narrative approach.

Users operate in a high-responsibility context and consistently verify all inputs: “[It] does not release me from my duty to check everything.” At the same time, repetitive patterns reveal automation potential, as “some information can be calculated automatically […] we have always the same payment terms.”

A key requirement is the ability to distinguish between system suggestions and reliable data: “[I need to] distinguish between stored data and AI proposals […] then I know where to pay attention.” This led to a central insight: users do not need to understand the system; they need to be able to assess its output quickly.
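The requirement to separate stored data from AI proposals can be sketched as a small data model. This is only an illustration: the names `Source`, `FormField`, and `fields_needing_attention`, as well as the example field names and values, are assumptions and are not taken from the SupplyOn platform or the study prototype.

```python
from dataclasses import dataclass
from enum import Enum


class Source(Enum):
    """Where a pre-filled value came from (hypothetical categories)."""
    STORED = "stored"                # reliable master data
    AI_SUGGESTION = "ai_suggestion"  # proposed by the system


@dataclass(frozen=True)
class FormField:
    name: str
    value: str
    source: Source


def fields_needing_attention(fields: list[FormField]) -> list[str]:
    """Return the names of fields the user should look at first."""
    return [f.name for f in fields if f.source is Source.AI_SUGGESTION]


# Invented example values, for illustration only.
invoice_form = [
    FormField("supplier_name", "Example Supplier GmbH", Source.STORED),
    FormField("payment_terms", "30 days net", Source.AI_SUGGESTION),
    FormField("due_date", "2024-07-31", Source.AI_SUGGESTION),
]

print(fields_needing_attention(invoice_form))
```

Tagging each value with its origin keeps the decision about where to pay attention in the data, so any visual treatment can be derived from it.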

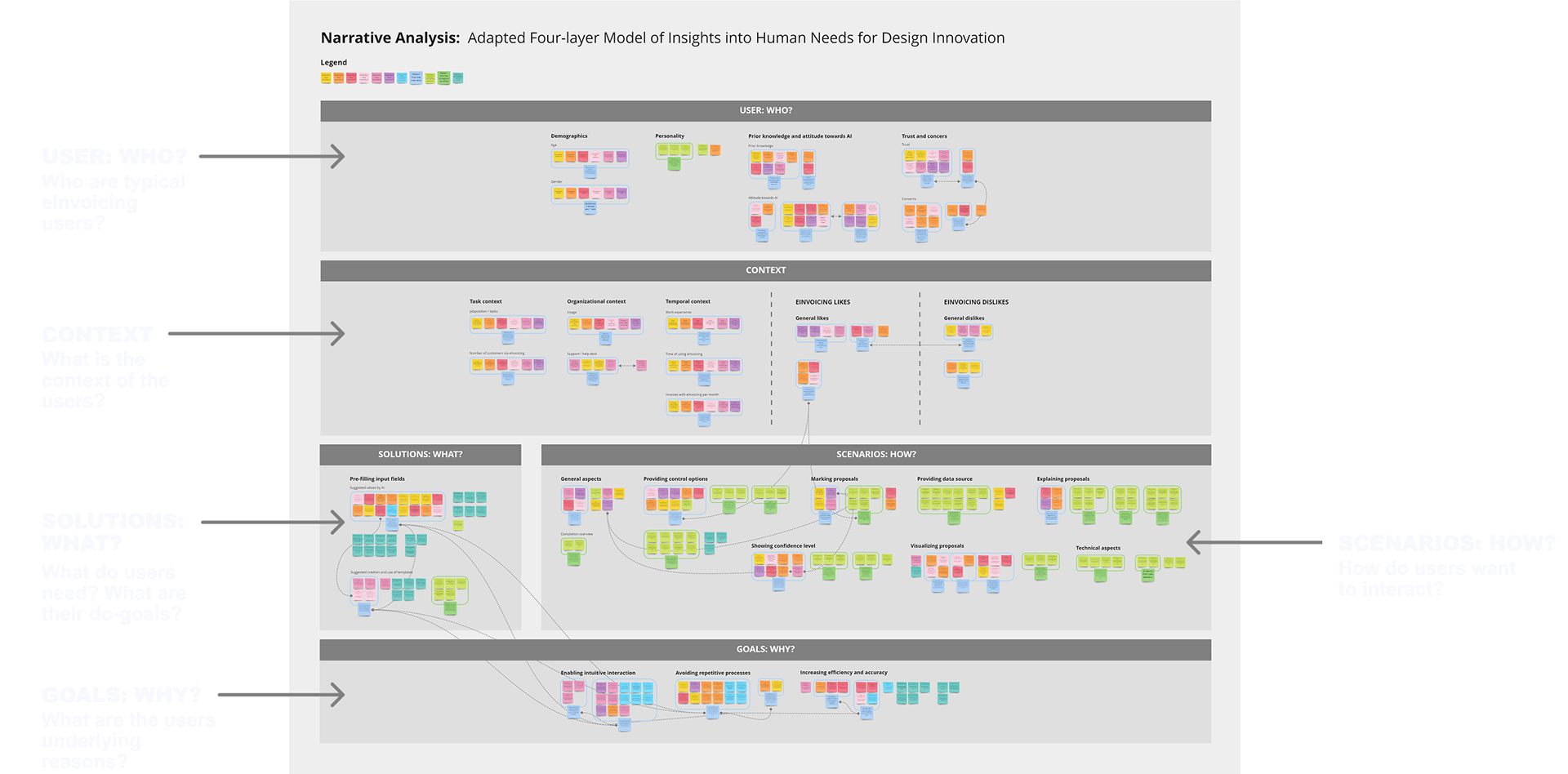

Content analysis, visualized as an affinity diagram with a total of 376 codes in 61 patterns, clustered into 5 themes. Connection lines mark relationships between patterns or codes. A different sticky note color was used for each participant (and a single color for the focus group discussion).

Narrative analysis adapted from the Four-Layer Model of Insights into Human Needs for Design Innovation

Concepts

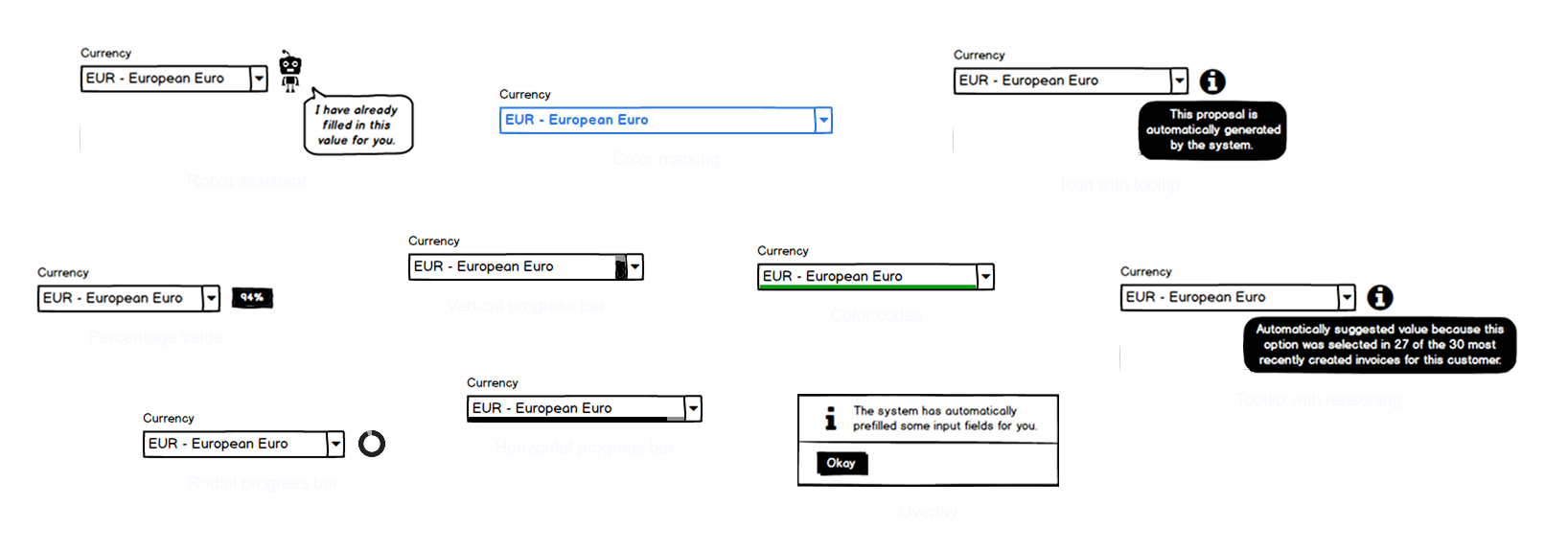

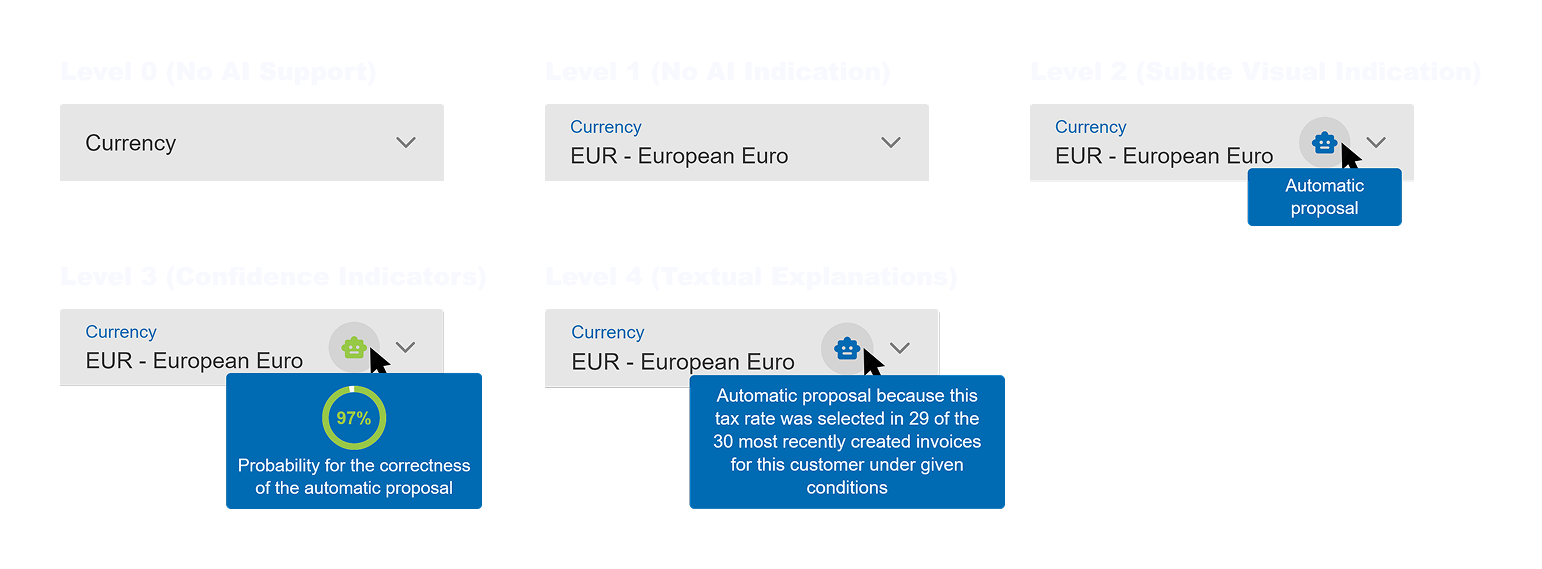

Five levels of transparency were defined to explore how system suggestions should be communicated, ranging from no feedback to subtle cues, confidence indicators, and textual explanations.

Across these levels, multiple concept variants were designed based on the same interaction: pre-filled inputs reviewed and validated by the user. Concepts were developed iteratively with domain experts, evolving from wireframes to clickable high-fidelity concepts.

Ideas as wireframes

High-fidelity concepts: tooltips are shown when hovering over the icon

Evaluation

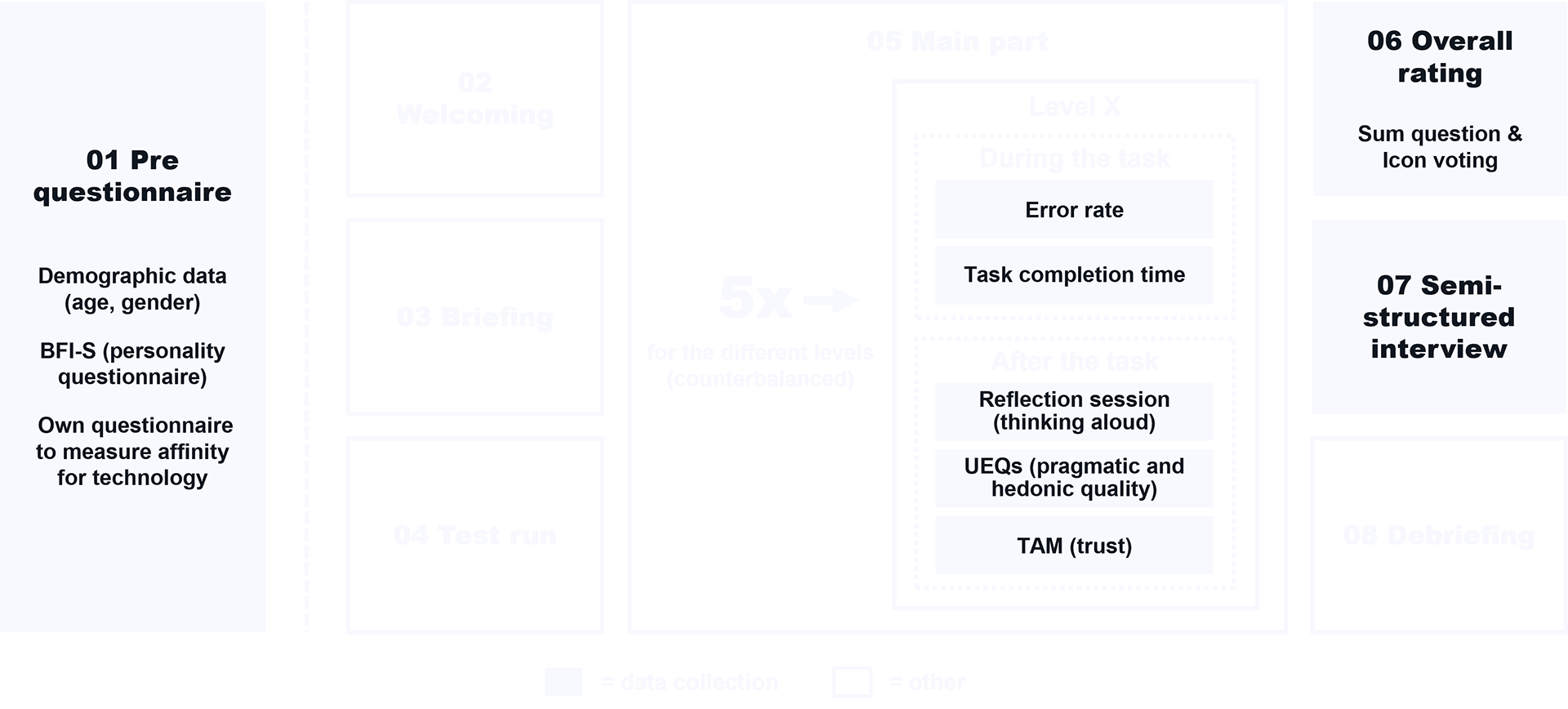

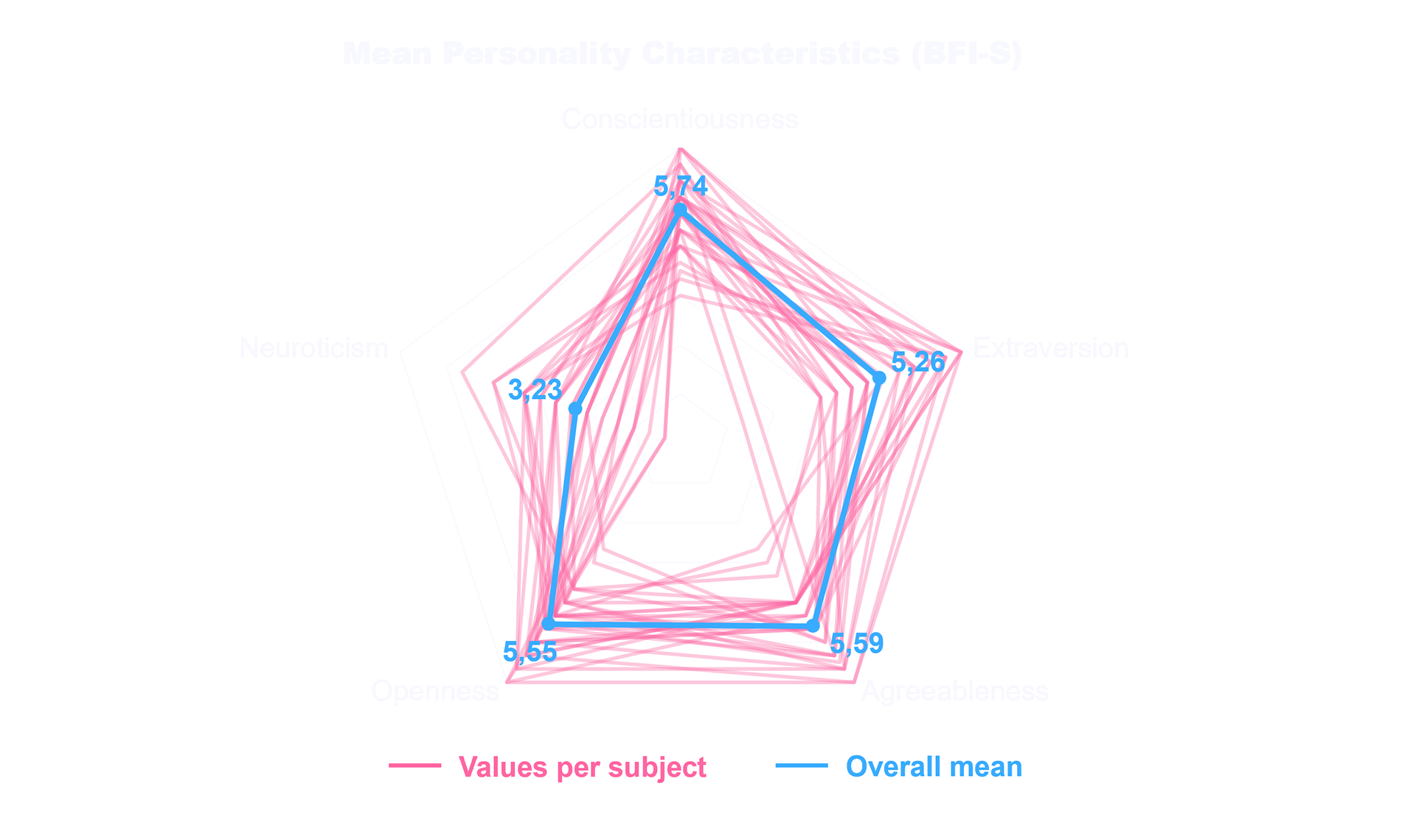

A controlled within-subject user study (n=30) was conducted using the interactive prototype. Participants completed realistic invoicing tasks across different concept variants.

Performance was measured through task completion time and error rate, complemented by user satisfaction, perceived trust, and overall attractiveness. Qualitative feedback provided additional insights.
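The performance measures named above can be aggregated per concept variant roughly as follows. This is a minimal sketch, not the analysis used in the study (which lists SPSS among its tools). The function name `summarize`, the variant labels, and every number in `trials` are placeholders invented for illustration and are not study data.

```python
from collections import defaultdict
from statistics import mean

# Placeholder trial records: (concept variant, completion time in s, error count).
# These values are invented for illustration and are NOT study data.
trials = [
    ("variant_a", 100.0, 1),
    ("variant_a", 120.0, 0),
    ("variant_b", 80.0, 0),
    ("variant_b", 90.0, 0),
]


def summarize(records):
    """Group trials by variant and compute simple descriptive measures."""
    grouped = defaultdict(list)
    for variant, seconds, errors in records:
        grouped[variant].append((seconds, errors))

    summary = {}
    for variant, rows in grouped.items():
        summary[variant] = {
            "mean_time_s": mean(t for t, _ in rows),
            "mean_errors": mean(e for _, e in rows),
            # Share of trials completed without any error.
            "error_free_share": sum(1 for _, e in rows if e == 0) / len(rows),
        }
    return summary


for variant, measures in summarize(trials).items():
    print(variant, measures)
```

In a within-subject design each participant contributes trials to every variant, so the same grouping could be extended with a participant identifier if per-person comparisons are needed.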

Study procedure

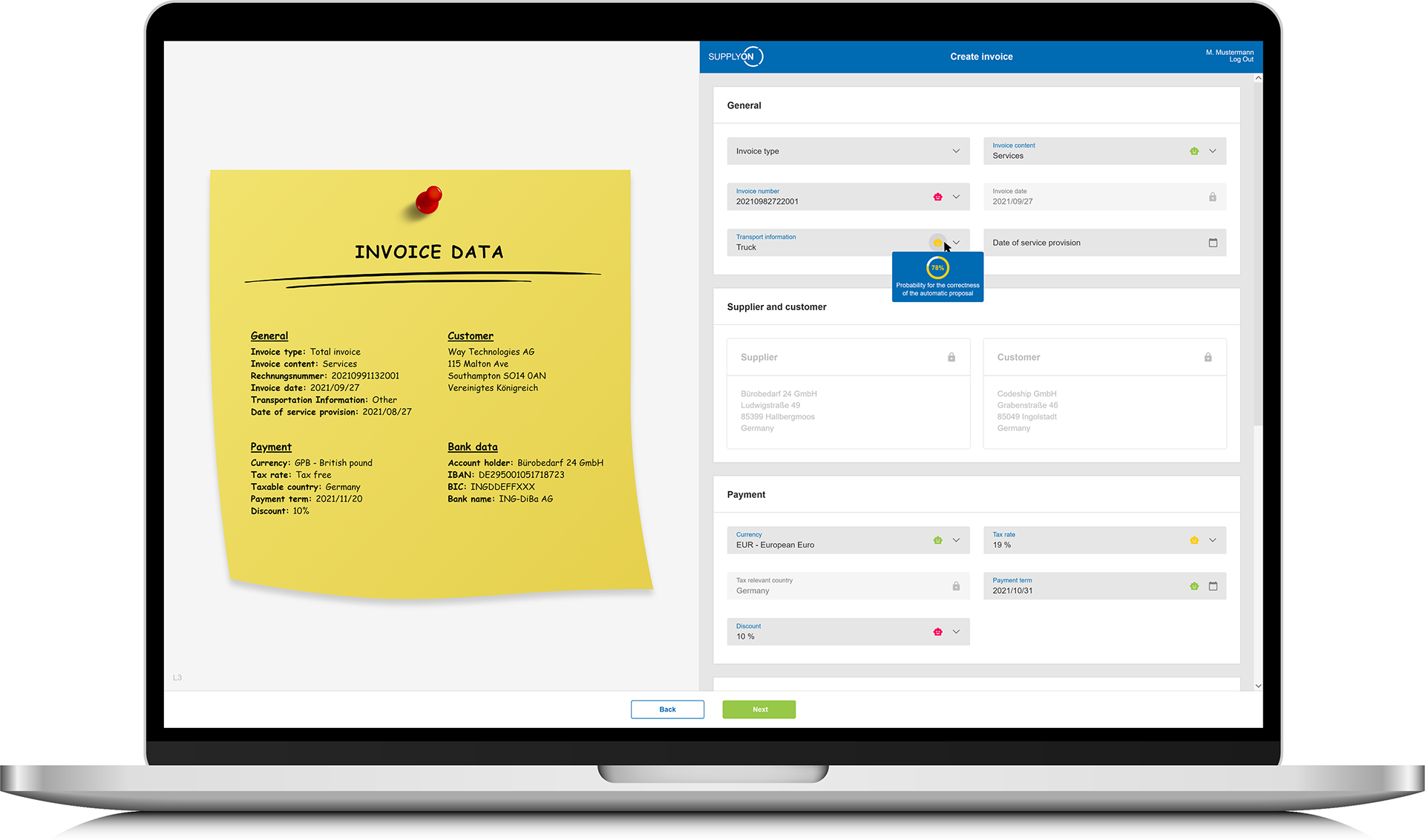

Study prototype: invoice data (left) entered into an interactive form (right, Level 3), with AI pre-filled fields.

Results

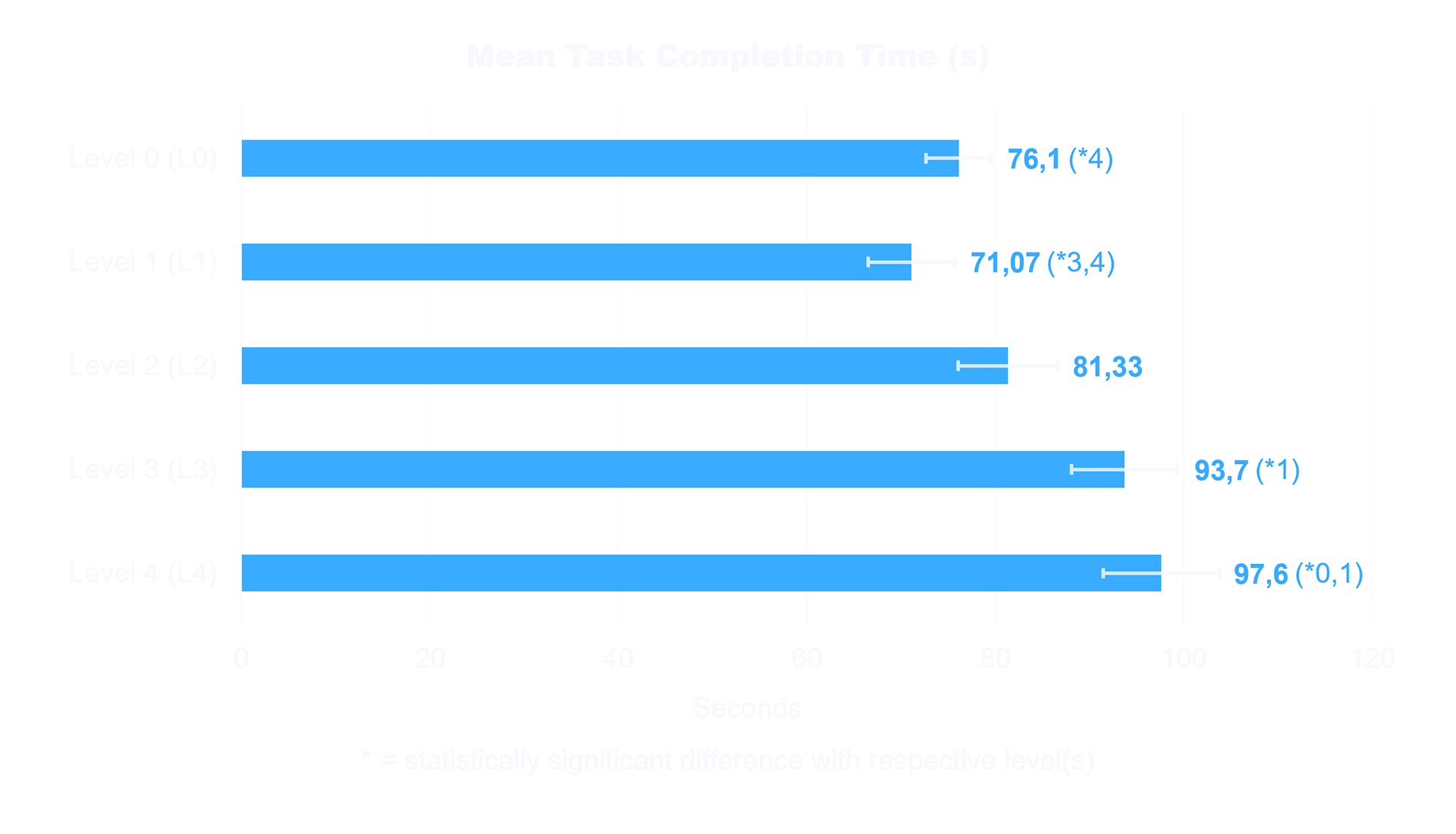

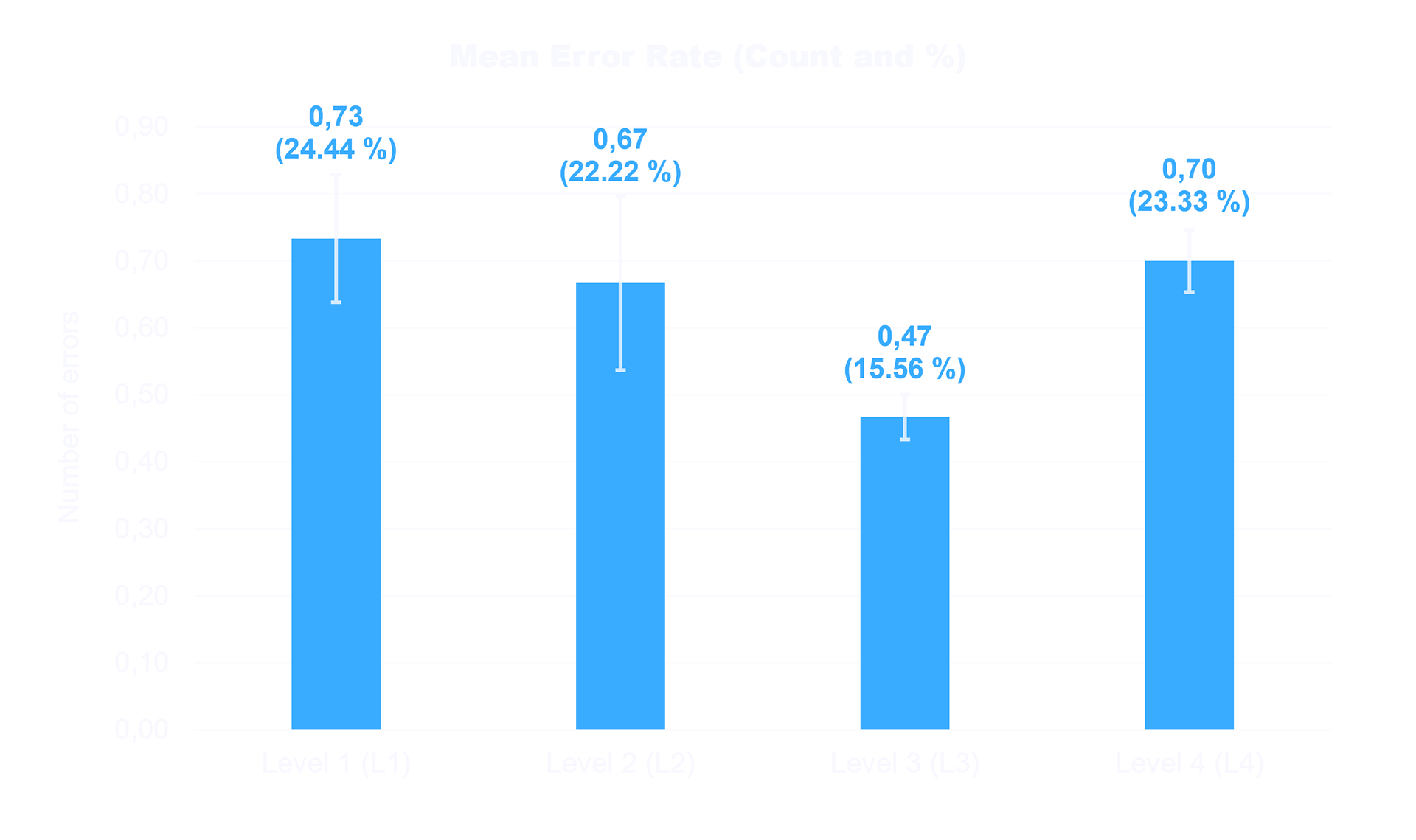

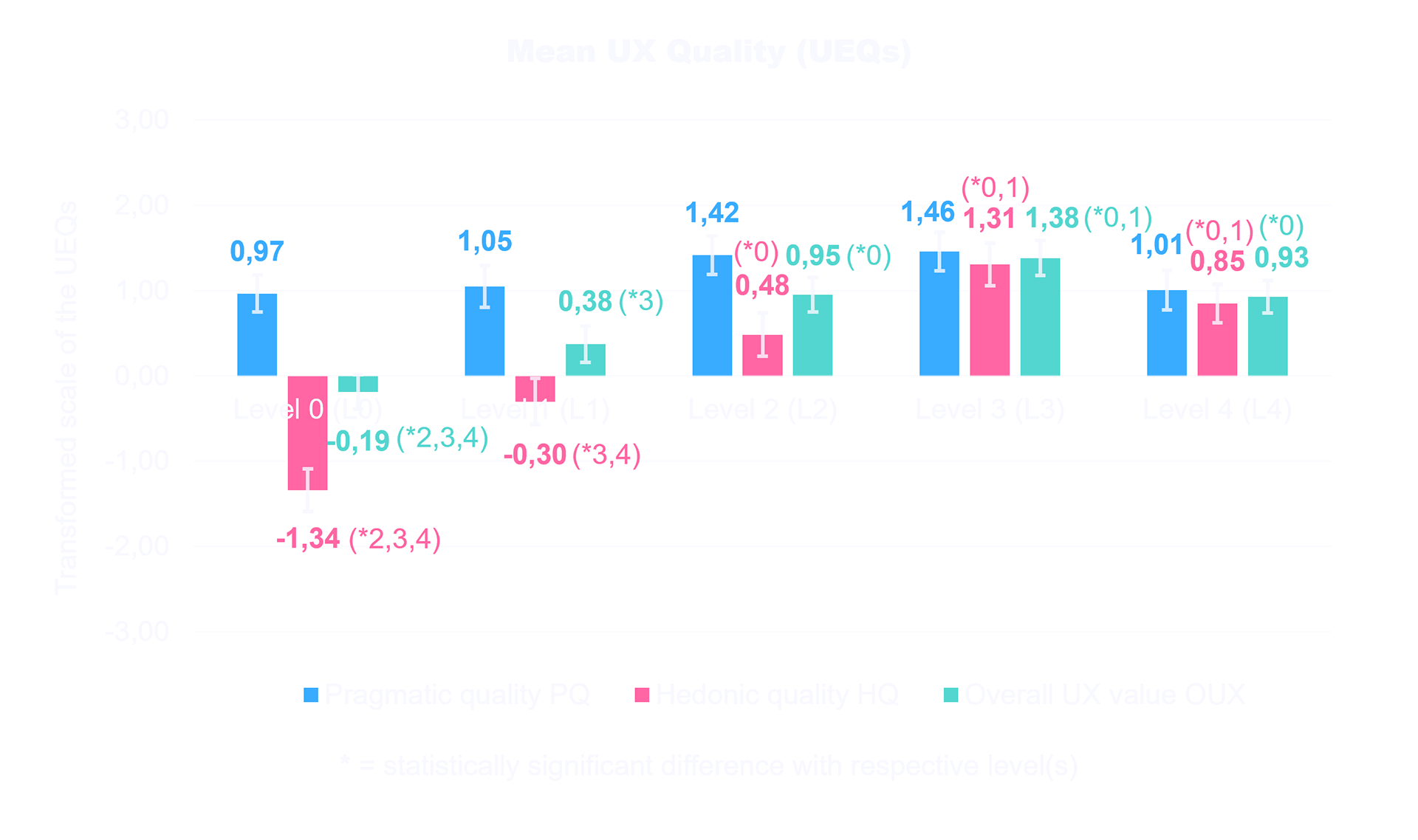

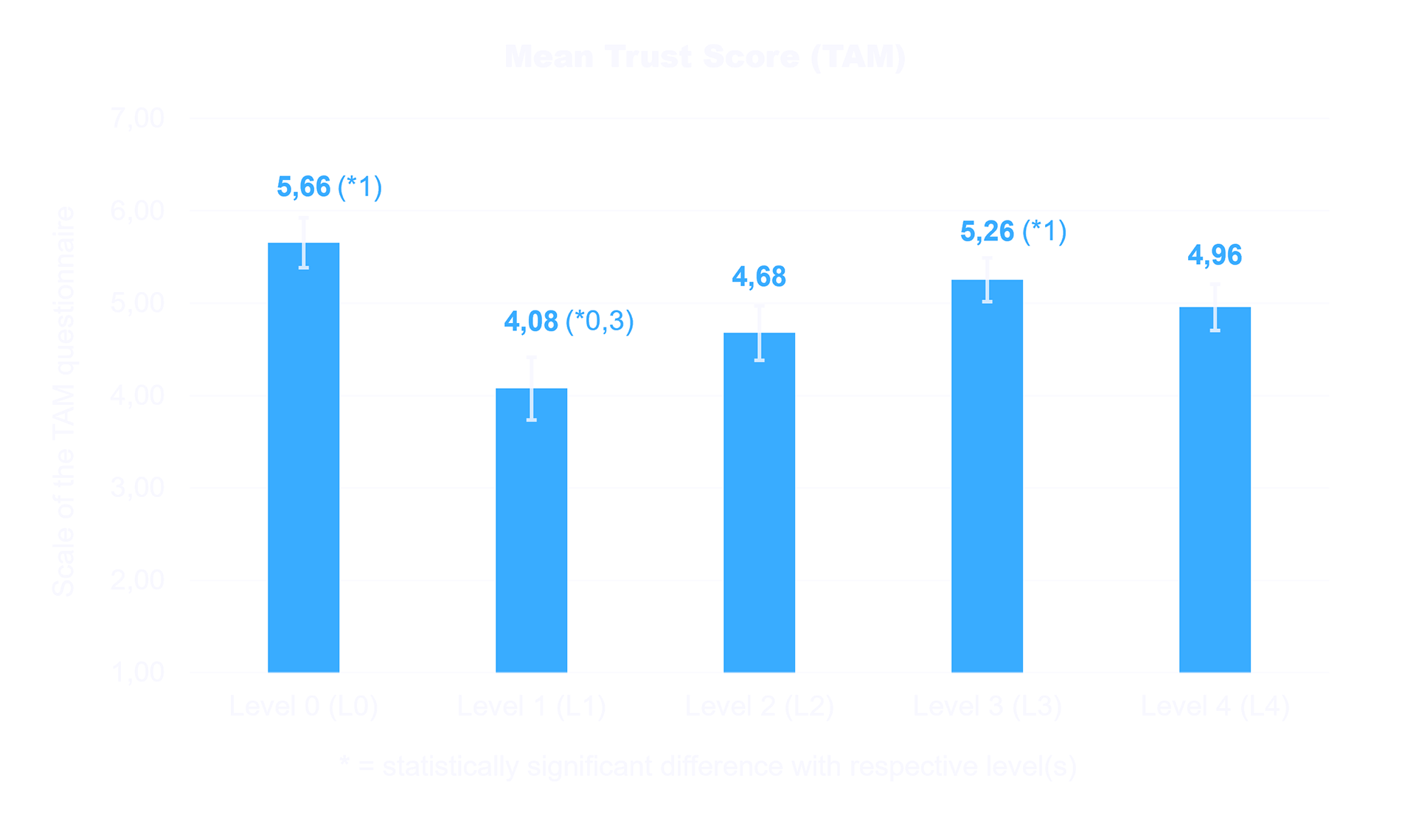

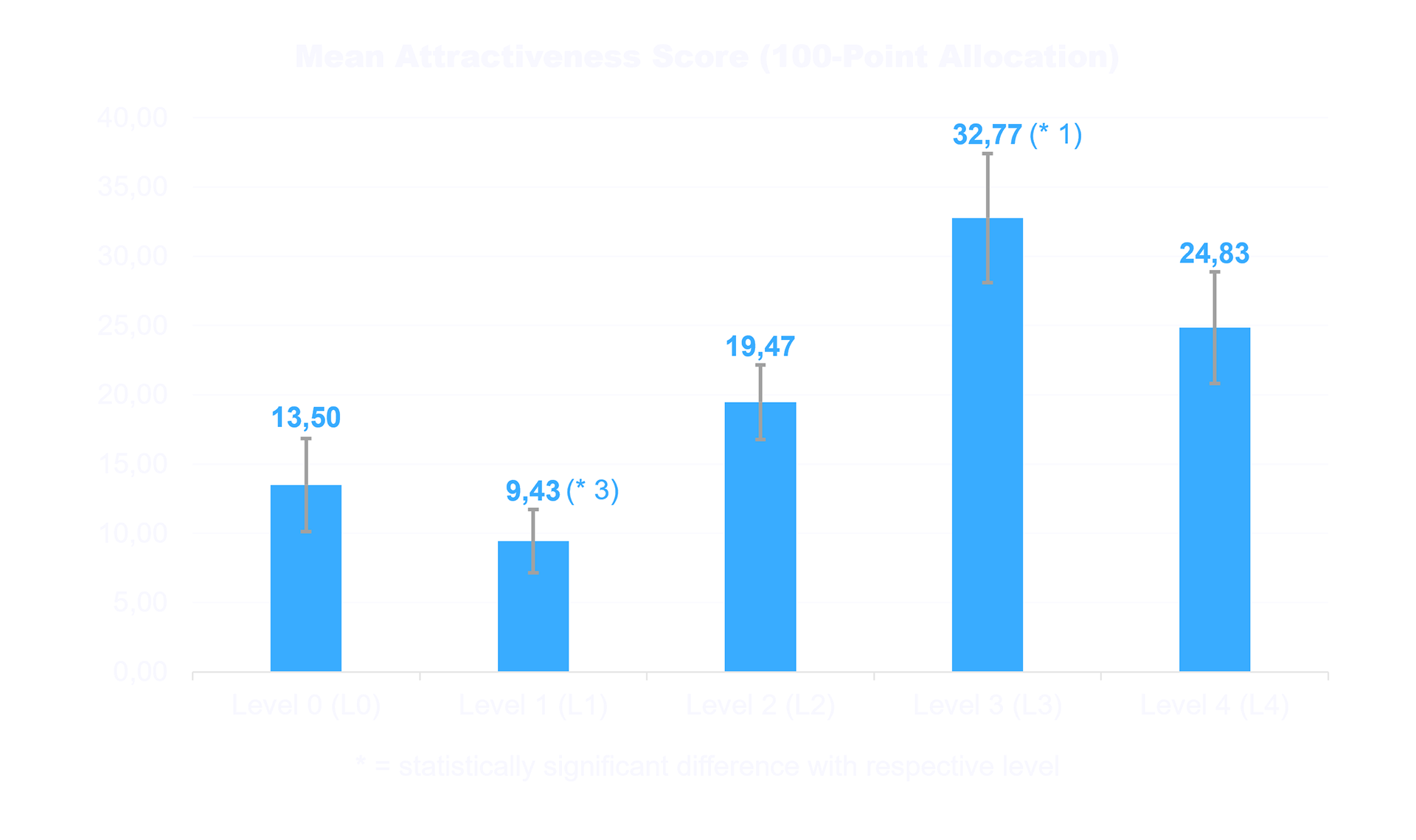

The findings show a clear trade-off between feedback depth and efficiency. More detailed explanations improved understanding and satisfaction but increased task completion time, while unmarked suggestions led to lower trust and higher error rates.

Confidence indicators proved most effective. Representing certainty through color coding and percentage values enabled faster validation, reduced errors by 8%, and significantly increased error-free task completion. Trust remained high when users had clear signals and dropped when suggestions lacked context.
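A confidence indicator of this kind, combining a percentage value with a color band, could be derived from a model score as sketched below. The thresholds, color names, and the names `ConfidenceIndicator` and `confidence_indicator` are assumptions made for this example; the study does not state which cut-offs the prototype used.

```python
from dataclasses import dataclass

# Hypothetical thresholds; the actual cut-offs of the prototype are not documented here.
HIGH_THRESHOLD = 0.90
MEDIUM_THRESHOLD = 0.70


@dataclass(frozen=True)
class ConfidenceIndicator:
    label: str  # percentage text shown next to the field
    color: str  # color band used as the visual cue


def confidence_indicator(score: float) -> ConfidenceIndicator:
    """Map a confidence score in [0, 1] to a percentage label and a color band."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0 and 1")
    if score >= HIGH_THRESHOLD:
        color = "green"
    elif score >= MEDIUM_THRESHOLD:
        color = "yellow"
    else:
        color = "red"
    return ConfidenceIndicator(label=f"{round(score * 100)}%", color=color)


print(confidence_indicator(0.95))
print(confidence_indicator(0.75))
print(confidence_indicator(0.40))
```

Keeping the mapping in one small function means the cut-offs can be tuned without touching the form layout.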

Mean Personality Characteristics (BFI-S)

Mean Task Completion Time (s)

Mean Error Rate (Count and %)

Mean UX Quality (UEQs)

Mean Trust Score (TAM)

Mean Attractiveness Score (100-Point Allocation)

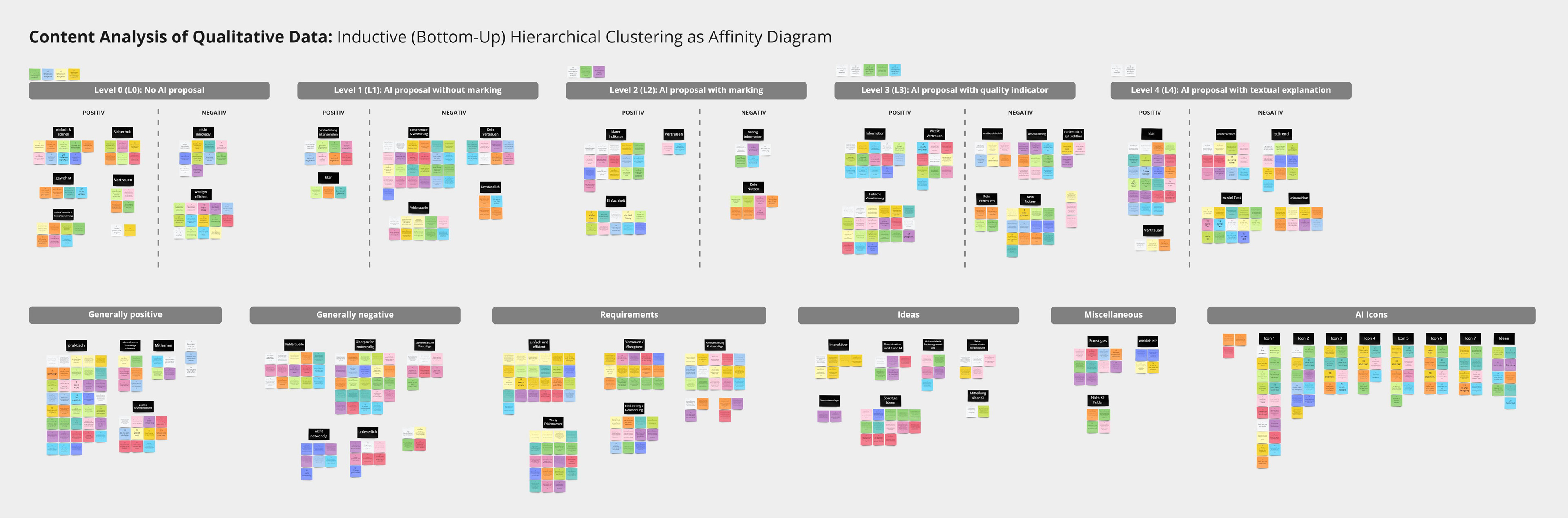

Qualitative feedback reinforced these results. Unmarked suggestions were perceived as confusing, while even subtle indicators improved clarity. Confidence-based feedback was described as intuitive and supportive, whereas textual explanations were seen as helpful but too time-consuming for everyday use.

Content analysis of the qualitative data, visualized as an affinity diagram with a total of 675 codes in 64 patterns, clustered into 11 themes. A different sticky note color was used for each participant.

Conclusion

Effective intelligent interfaces are not defined by automation, but by how well they support user decisions. In high-risk contexts, users need clear, reliable signals, not more information.

Simple, scannable feedback, such as confidence indicators, proved most effective, improving accuracy and efficiency without disrupting the workflow. More detailed explanations can add value, but should remain optional.

The key is reducing complexity: even when systems are complex, interaction must stay fast and easy to interpret.

For more details, see my master's thesis.